AI Data Center Boom Strains US Resources

Saturday, February 28th, 2026 - The rapid proliferation of Artificial Intelligence (AI) is no longer a future prediction; it's a present-day reality profoundly reshaping the technological landscape - and straining the nation's resources in the process. The engine driving this revolution is the data center, and the United States is at the epicenter of a construction boom struggling to keep pace with the exponential growth in demand. While AI promises transformative benefits across numerous sectors, its energy-intensive nature presents a significant challenge that demands immediate and innovative solutions.

From Boom to Potential Bust: The Scale of the Problem

The initial surge in AI development, documented extensively since 2023, has escalated into a full-scale infrastructure crisis. The US has firmly established itself as a global leader in AI research, development, and deployment, attracting billions in investment and a highly competitive talent pool. However, this leadership comes at a cost. The computational power required to train sophisticated AI models - particularly large language models (LLMs) like those powering advanced chatbots and complex analytical tools - is astronomical. Each iteration of model improvement requires massive processing capacity, which in turn demands ever-larger and more powerful data centers.

The problem isn't simply about building more data centers; it's about the type of infrastructure required. Traditional data centers, designed for general-purpose computing, are ill-equipped to handle the specialized workloads of AI. Modern AI data centers demand high-performance computing (HPC) hardware, including specialized GPUs and TPUs, along with advanced networking and storage solutions. This specialized hardware, while far more powerful, also demands significantly more energy.

A Thirst for Power and Water: The Environmental Cost

The energy consumption of these facilities is reaching critical levels. Recent reports indicate that a single, large-scale AI data center can consume as much electricity as a small city, and this figure is only expected to increase as AI models become more complex. The carbon footprint associated with this energy consumption is a major environmental concern, particularly given that a substantial portion of the US energy grid still relies on fossil fuels. While investments in renewable energy are growing, they are not yet sufficient to offset the increasing demand from the AI sector. Furthermore, the water requirements for cooling these massive server farms are substantial, placing additional stress on already strained water resources, especially in arid regions.

The Geographic Scramble: Locating the Future of AI

The race to build new data centers is driving a geographic scramble for suitable locations. Developers are prioritizing areas with access to cheap electricity - often renewable sources like hydroelectric power - and abundant water supplies. This has led to a concentration of data center construction in specific regions, such as the Pacific Northwest, the Southeast, and parts of the Midwest. However, this concentration is creating localized resource pressures, raising concerns about water scarcity, grid instability, and potential conflicts with other industries and communities.

Innovation as a Necessity: Pathways to Sustainability

The long-term sustainability of AI hinges on our ability to mitigate the environmental impact of data centers. Several innovative approaches are being explored, including:

- Advanced Cooling Technologies: Traditional air-cooling systems are comparatively inefficient. Liquid cooling, whether direct-to-chip or full immersion (where servers are submerged in a dielectric fluid), offers significantly improved cooling efficiency, reducing both energy and water consumption.

- Renewable Energy Integration: Powering data centers with 100% renewable energy is the ultimate goal. This requires significant investments in solar, wind, and other renewable energy sources, as well as improved energy storage technologies.

- Hardware Optimization: Developing more energy-efficient hardware is crucial. Researchers are exploring new chip architectures, materials, and manufacturing processes to reduce power consumption without sacrificing performance.

- Data Center Design: Optimizing data center layouts and airflow patterns can improve cooling efficiency and reduce energy waste. Modular data center designs, which allow for scalable and flexible expansion, are also gaining popularity.

- AI-Powered Energy Management: Utilizing AI to optimize energy usage within data centers themselves, predicting demand and dynamically adjusting power distribution, can yield substantial savings.
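To make the last item more concrete, here is a minimal sketch of what "predicting demand and dynamically adjusting power distribution" could look like in code. It is an illustration only and is not drawn from the reporting: the function names, the rack labels, and the kilowatt figures are all hypothetical, and a simple moving average stands in for whatever forecasting model a real facility would use.

```python
from typing import Dict, List


def forecast_next(history: List[float], window: int = 3) -> float:
    """Predict the next power demand (kW) as the mean of the last `window` readings.

    A moving average is a deliberately simple stand-in for an AI model.
    """
    if not history:
        raise ValueError("history must contain at least one reading")
    recent = history[-window:]
    return sum(recent) / len(recent)


def allocate_power(forecasts: Dict[str, float], budget_kw: float) -> Dict[str, float]:
    """Distribute a fixed power budget across racks.

    If total forecast demand fits within the budget, every rack gets what it
    is forecast to need. Otherwise each rack is scaled down proportionally.
    """
    total = sum(forecasts.values())
    if total <= budget_kw:
        return dict(forecasts)
    scale = budget_kw / total
    return {rack: demand * scale for rack, demand in forecasts.items()}


# Hypothetical readings in kW for two racks (illustrative values only).
readings = {
    "rack_a": [10.0, 12.0, 14.0],
    "rack_b": [30.0, 30.0, 36.0],
}
forecasts = {rack: forecast_next(history) for rack, history in readings.items()}
plan = allocate_power(forecasts, budget_kw=22.0)
print(forecasts)
print(plan)
```

In this toy setup the forecast demand exceeds the assumed 22 kW budget, so both racks are scaled back by the same fraction. A production system would likely weigh workload priority, thermal limits, and electricity prices as well, none of which is modeled here.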

The Road Ahead: Balancing Progress and Responsibility

The AI data center boom is a defining trend of the 2020s, and its impact will continue to shape the future of technology and energy consumption. Addressing the resource constraints and environmental challenges requires a concerted effort from governments, industry leaders, and researchers. Without a commitment to sustainable practices, the benefits of AI could be overshadowed by its unintended consequences. The next few years will be critical in determining whether we can harness the power of AI responsibly and build a future where innovation and sustainability go hand in hand.

Read the Full Interesting Engineering Article at:

https://interestingengineering.com/ai-robotics/us-ai-data-centers-power-facility

on: Thu, Feb 26th

by: Investopedia

Nvidia Stock Disconnect: Strong Business, Moderate Performance

on: Tue, Feb 24th

by: inforum

Michigan Business Leaders Navigate Headwinds & Opportunities

on: Fri, Feb 20th

by: whitehouse.gov

on: Thu, Feb 19th

by: moneycontrol.com

on: Thu, Feb 19th

by: Fortune

on: Tue, Feb 17th

by: Investopedia

on: Thu, Feb 12th

by: Seeking Alpha

Google Unveils Gemini 3 'Deep Think' for Science & Engineering

on: Mon, Feb 02nd

by: Investopedia

on: Tue, Jan 27th

by: The Motley Fool

on: Sun, Jan 18th

by: Seeking Alpha

BlackRock Science & Technology Trust: AI Investment Opportunity

on: Sat, Jan 03rd

by: Science Daily

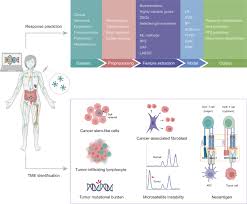

AI-Powered 'Neo-Tumor Profiling' Shows Promise in Predicting Immunotherapy Response

on: Thu, Dec 11th 2025

by: gizmodo.com

China Unveils 34,175-mile AI Super-Network: A Nationwide Cloud Supercomputer